Have you ever felt like your social media feed knows exactly what you’re thinking? You scroll through your favorite app, and it shows you one post after another that perfectly matches your mood, your hobbies, or even your political leanings. In 2026, our digital lives are no longer just personalized; they are engineered around our individual preferences.

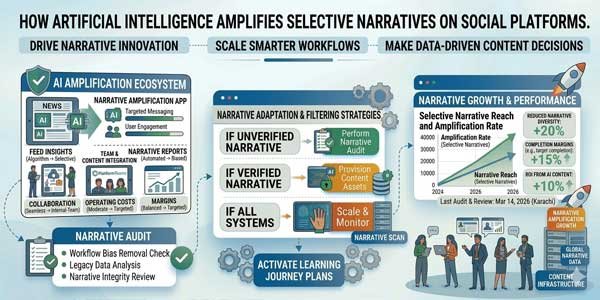

Behind every “Like” button and every “For You” page lies a complex web of AI algorithms. These recommendation engines and ranking systems act as invisible editors, deciding which stories reach millions and which ones vanish into the digital void. As these tools get smarter, a critical question arises: who is actually deciding what trends, and what happens when AI starts choosing one side of a story over another?

How Do AI Algorithms Work?

To understand how narratives are shaped, we first need to examine how these algorithms make decisions. At their heart, they are not programmed to seek the “truth” or “balance.” Instead, they are optimized for one thing: engagement.

- Engagement-Based Ranking: AI considers how many likes, shares, and comments a post receives. If people react quickly and in large numbers to a post, the AI treats it as good content and shows it to even more people.

- Feedback Loops: When someone clicks on a specific type of video, the AI records that preference. It then starts showing more of the same, and each time you engage, the “loop” gets tighter, reinforcing your existing interests.

- Virality Bias: Algorithms are built to find the next big thing. This creates a bias toward “loud” content. Simple, catchy, or shocking posts often “win” the race for attention over complicated, quiet, or nuanced discussions.

- Amplification of Selective Narratives: Because AI is designed to maximize time spent on the app, it naturally favors certain types of stories. This is where selective narratives begin to take over our screens.
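To make the first two ideas concrete, here is a minimal Python sketch of engagement-based ranking with a feedback loop. Everything in it is an illustrative assumption rather than any platform's real system: the reaction weights, the `affinity` boost, the function names, and the sample posts are all hypothetical.

```python
from collections import defaultdict

# Hypothetical weights for each reaction type (assumed values, not real platform numbers).
WEIGHTS = {"likes": 1.0, "comments": 2.0, "shares": 3.0}


def engagement_score(post):
    """Weighted sum of reactions. Nothing here measures accuracy or balance."""
    return sum(WEIGHTS[kind] * post[kind] for kind in WEIGHTS)


def rank_feed(posts, affinity):
    """Sort posts by engagement, boosted by the user's learned interest in each topic."""
    return sorted(
        posts,
        key=lambda p: engagement_score(p) * (1.0 + affinity[p["topic"]]),
        reverse=True,
    )


def record_click(affinity, topic, step=0.5):
    """Feedback loop: each click raises the boost applied to that topic."""
    affinity[topic] += step


# Made-up sample posts.
posts = [
    {"id": "policy-explainer", "topic": "policy", "likes": 40, "comments": 5, "shares": 2},
    {"id": "outrage-clip", "topic": "outrage", "likes": 30, "comments": 20, "shares": 15},
    {"id": "cat-video", "topic": "pets", "likes": 50, "comments": 3, "shares": 4},
]

affinity = defaultdict(float)  # a new user starts with no learned preferences

before = [p["id"] for p in rank_feed(posts, affinity)]
for _ in range(3):  # the user clicks three pet videos
    record_click(affinity, "pets")
after = [p["id"] for p in rank_feed(posts, affinity)]

print(before)  # ['outrage-clip', 'cat-video', 'policy-explainer']
print(after)   # ['cat-video', 'outrage-clip', 'policy-explainer']
```

In this toy setup the heavily shared clip leads the feed for a new user, three clicks are enough to push pet content to the top, and the detailed explainer stays last in both rankings.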

Emotions Over Logic:

- In the digital world, “outrage” is a currency. Emotional and polarizing content travels much faster than calm, factual reporting. AI tools recognize that a post that makes people angry will get more “angry” reactions and comments. Consequently, the algorithm boosts these heated posts, making them appear far more popular than they actually are in the real world.

- The Burial of Nuance: Complicated issues usually have many sides. However, AI often treats nuanced coverage as less “engaging.” A long, detailed explanation of a policy might get “buried” because users scroll past it. Meanwhile, a 10-second clip that takes a side, even if it’s misleading, gets pushed to the top because it’s easy to consume.

- The Illusion of Consensus: When an AI shows you the same narrative over and over, you start to believe that “everyone” thinks that way. This repetition creates a perceived consensus. If your entire feed is filled with one specific viewpoint, it becomes very hard to remember that other opinions even exist.

- Echo Chambers: AI recommendations effectively build digital walls around us. By showing us only what we already like, they create an “echo chamber.” We hear only our own views reflected back to us, which makes it easier for selective and sometimes false narratives to grow without challenge.

Real-World Examples: When AI Shapes Reality

We can see the power of these tools in action every day. One of the most common examples is viral misinformation. A fake news story, carefully crafted with AI-generated images or “deepfake” videos, can trigger an algorithm’s engagement sensors. Before human moderators can even flag it, the AI has already shared it with millions of users who are likely to believe it.

Another major example is coordinated hashtag trends. In some cases, groups use AI tools to generate thousands of posts at once. The algorithm sees this sudden “engagement” and treats it as a real conversation. It then pushes that hashtag into the “Trending” section, tricking real users into joining a narrative started by machines.
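The hashtag scenario can be sketched in a few lines of Python. This is a deliberately naive trend detector written for illustration only; the function name, the thresholds, and the sample data are assumptions, and real platforms do not publish how their trending logic works.

```python
from collections import Counter


def trending_hashtags(current_posts, baseline_counts, min_ratio=5.0, min_posts=100):
    """Flag hashtags whose post count in the current window far exceeds their usual count.

    Naive on purpose: it counts posts, not people, so it cannot tell
    a real conversation from automated volume.
    """
    current = Counter(tag for _author, tag in current_posts)
    flagged = []
    for tag, count in current.items():
        usual = baseline_counts.get(tag, 1)  # assume 1 post per window for unseen tags
        if count >= min_posts and count / usual >= min_ratio:
            flagged.append(tag)
    return sorted(flagged)


# Hypothetical data: 1,000 posts from only 5 automated accounts,
# plus ordinary chatter from 300 different real users.
bot_posts = [(f"bot{i % 5}", "#campaign") for i in range(1000)]
organic_posts = [(f"user{i}", "#weather") for i in range(300)]
baseline = {"#weather": 280, "#campaign": 10}

flagged = trending_hashtags(bot_posts + organic_posts, baseline)
print(flagged)  # ['#campaign']
```

Here five accounts are enough to make `#campaign` look like a sudden surge, while the hashtag that 300 different people actually used is not flagged because its volume is close to normal.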

The Role of Platforms:

The most popular social media platforms often claim to be “neutral” spaces where everyone has an equal right to speak. However, their algorithms are design choices. Every time an engineer tweaks a ranking system to favor “meaningful social interaction,” they are deciding what kind of content people should see.

Moderation is another major challenge, as AI tools used for moderation are often inconsistent. They might ban a harmless post while letting a highly polarizing, selective narrative go viral because it technically “followed the rules” but was designed to spread division. This lack of transparency means users rarely know why they are seeing what they are seeing.

Why Does AI Amplification Matter Right Now?

This isn’t just about what’s on your phone; it’s about the health of our society. When AI amplifies selective narratives, it directly affects public opinion. People may make real-life decisions, like how to vote or how to treat their neighbors, based on a distorted picture of reality. The constant stream of polarizing content also erodes trust in media. If we can’t agree on basic facts because our AI feeds are telling us different stories, democratic discourse becomes nearly impossible. It also stunts our individual critical thinking, as we stop questioning the information that arrives so conveniently on our screens.

Key Takeaways!

AI tools are not inherently evil; they are powerful mirrors that reflect and magnify human behavior. However, unchecked amplification can prove dangerous as these tools become more central to our lives. We need more transparency from tech companies and more accountability for the narratives they choose to boost. For each reader, the most important tool is awareness. When you see a post that gives you an instant feeling of outrage or perfectly fits your worldview, ask yourself: “Is this the whole story, or is an algorithm just showing me what it knows I want to see?” The future of a healthy digital world depends on our ability to look past the AI and find the truth for ourselves.

About the Author:
