The Blogger team has shipped many updates, and we are continuing to share the new features they bring. In our previous posts we discussed Custom Permalinks, the Dynamic Description Tag and the Custom 404 Error Page, some of the best updates from the Blogger staff. Today we will talk about the custom robots.txt file, which is another Blogger update: the previous dashboard did not let us edit this file, although it was editable in WordPress, so the option is now available in Blogspot too.

In Blogger this feature is known as Custom Robots.txt, and you can customize the file as you like. We will briefly discuss the file and the data in it, share the pros and cons of editing it, and cover what to edit and what to leave alone. So let's start the tutorial.

What Is Robots.txt?

Robots.txt is the common name of a plain text file placed in a website's root directory. The file gives instructions about the site to web robots and spiders. Site owners can use robots.txt to keep cooperating robots from crawling all or part of a site. Note that the file itself is public and only well-behaved crawlers obey it, so it is not a way to protect truly private content.

Where Is Blogger Robots.txt File?

Every blog hosted on Blogger has a default robots.txt file. You can view your own at an address like the one below.

www.yourblog.com/robots.txt

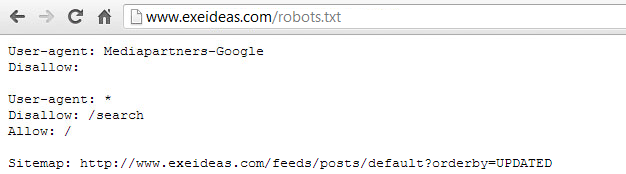

What Is In The Default Blogger Robots.txt File?

The default Blogger robots.txt file contains the following rules. They are active on every blog, new or old, but with the new feature you can customize them easily.

User-agent: Mediapartners-Google

Disallow:

User-agent: *

Disallow: /search

Allow: /

Sitemap: http://example.blogspot.com/feeds/posts/default?orderby=UPDATED

Full Step By Step Explanation:

There are three main parts in a robots.txt file, so we will go through what each one does in a simple way, and after that we will learn how to add a custom robots.txt file to Blogspot blogs.

User-agent: Mediapartners-Google

Disallow:

This code is for the Google AdSense robot and helps it serve better ads on your blog. The empty Disallow line means nothing is blocked for this crawler. Whether or not you use Google AdSense on your blog, simply leave it as it is.
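To see what the empty Disallow line does, here is a minimal sketch that uses Python's standard urllib.robotparser as a stand-in for a crawler. The helper name adsense_can_fetch is our own, and real crawlers may parse the file slightly differently.

```python
from urllib.robotparser import RobotFileParser

# The AdSense group from Blogger's default file. The two lines are kept
# together because this parser treats a blank line as the end of a group.
ADSENSE_RULES = """\
User-agent: Mediapartners-Google
Disallow:
"""


def adsense_can_fetch(path: str) -> bool:
    """Return True if the AdSense crawler may fetch `path` under ADSENSE_RULES."""
    parser = RobotFileParser()
    parser.parse(ADSENSE_RULES.splitlines())
    return parser.can_fetch("Mediapartners-Google", path)


# An empty Disallow value blocks nothing, so both checks should pass.
print(adsense_can_fetch("/"))
print(adsense_can_fetch("/search/label/Blogspot"))
```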

User-agent: *

Disallow: /search

Allow: /

This is the main part of the file, and it works like a guard: it decides whether search engines (SE) may enter your site. You can also limit where SE crawlers may and may not go on your site. Keep an eye on this part, because a single mistake can keep SE away from your site and cost you your ranking, and a mistake in the other direction can let SE show your secret pages in search results.

There are two directives in this piece: Disallow and Allow. Disallow stops SE spiders from crawling the matching links, which normally keeps those pages out of SE results. Allow keeps the way clear for SE spiders to see your links and pick them up for their search results.

1.) Disallow: /search

This is the default rule added by Blogger. It stops SE from crawling every link that starts with www.exeideas.blogspot.com/search or www.exeideas.com/search. As a result, your label links cannot show in SE results, which can mean lower traffic. So why does Blogger block this? They do it to stop SE from fetching the links produced when people search within your site, which keeps SE results clean and sends SE visitors directly to the content pages.

SE do not like links of this type (https://www.exeideas.com/search/?q=seo), which, as you can see, contain /search. But this link (https://www.exeideas.com/search/label/Blogspot) also contains /search, so by disallowing all /search links you can lose traffic on your blog. As we mentioned above, Blogger wants only content pages in SE, and these links list many posts rather than a single one.
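The effect of the default rules can be checked with a short sketch. It uses Python's standard urllib.robotparser in place of a real crawler, so treat it as an approximation: search engines may apply their own matching rules. The helper is_allowed and the sample post path are our own illustrations, not part of Blogger.

```python
from urllib.robotparser import RobotFileParser

# The general group from Blogger's default robots.txt.
DEFAULT_RULES = """\
User-agent: *
Disallow: /search
Allow: /
"""


def is_allowed(rules: str, path: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may crawl `path` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(agent, path)


# Search results and label pages both start with /search, so both are blocked,
# while an ordinary post (hypothetical path) falls through to Allow: /
for path in ("/search/?q=seo", "/search/label/Blogspot", "/2013/05/sample-post.html"):
    print(path, is_allowed(DEFAULT_RULES, path))
```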

How To Remove Only Search Pages From SE?

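We can also stop SE from crawling only the search pages of our site while allowing label pages to appear in SE results. Use this rule (Disallow: /search/?q) in place of the one above so that only the search results are disallowed. If Disallow: /search stays in the file, label pages remain blocked, because their links also start with /search.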

Note: This search URL only works with a custom search engine on your blog, not with the Google search engine for blogs.
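Here is a small sketch of the narrower rule, assuming it is used instead of Disallow: /search rather than alongside it. It again relies on Python's standard urllib.robotparser as an approximation of a crawler, and the helper is_allowed is our own.

```python
from urllib.robotparser import RobotFileParser

# Assumption: the narrower rule replaces the broad Disallow: /search line.
NARROW_RULES = """\
User-agent: *
Disallow: /search/?q
Allow: /
"""


def is_allowed(rules: str, path: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may crawl `path` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(agent, path)


# Search result links stay blocked, but label pages are now crawlable.
print(is_allowed(NARROW_RULES, "/search/?q=seo"))
print(is_allowed(NARROW_RULES, "/search/label/Blogspot"))
```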

How To Remove A Post/Page From SE?

We can also stop SE from crawling a specific post or page by adding a line just under Disallow: /search, so that compliant crawlers skip that link. To disallow a post, add (Disallow: /yyyy/mm/post-url.html), replacing yyyy with the year the post was published, mm with the month, and post-url with the rest of your post URL. Do not include your domain anywhere in robots.txt. To hide a page from SE, add (Disallow: /p/page-url.html) and change only the page URL.
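As a rough illustration, the sketch below blocks one post and one page and leaves everything else open. The paths hidden-post.html, private-page.html and public-post.html are made-up examples, and Python's standard urllib.robotparser stands in for a real crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical post and page paths, written without the domain.
RULES_WITH_HIDDEN_LINKS = """\
User-agent: *
Disallow: /search
Disallow: /2013/05/hidden-post.html
Disallow: /p/private-page.html
Allow: /
"""


def is_allowed(rules: str, path: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may crawl `path` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(agent, path)


for path in (
    "/2013/05/hidden-post.html",
    "/p/private-page.html",
    "/2013/05/public-post.html",
):
    print(path, is_allowed(RULES_WITH_HIDDEN_LINKS, path))
```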

2.) Allow: /

This is like an open gate and a free invitation for all. It means that SE can crawl any link on your site freely, except the links disallowed above. That makes it an important rule, and the default Blogger robots.txt file includes it. A lone / means that you allow every link on your domain.

Sitemap: http://example.blogspot.com/feeds/posts/default?orderby=UPDATED

This line helps SE find the latest posts of the blog so they are crawled first and fast. It points the web spider to one link where all the links are available, so it can do its job quickly and well. All your latest post links appear at the above link automatically, and the spider crawls them subject to the rules above about what to crawl and what not to. With this link, there is little chance of a post being missed by the SE spider.

This sitemap link only tells web crawlers about the 25 most recent posts on your blog. If you have more than 25 posts and want to increase the number of links in your sitemap, replace the default sitemap with the ones we provide below. The number of posts covered then depends on which set of lines you use, rather than being limited to the 25 most recent posts.

If you have more than 25 published posts on your blog, you can use the sets of sitemap lines below, in which the first line is the default and the others are new…

Sitemap: http://example.blogspot.com/feeds/posts/default?orderby=UPDATED

Sitemap: http://example.blogspot.com/feeds/posts/default?orderby=UPDATED

Sitemap: http://example.blogspot.com/atom.xml?redirect=false&start-index=1&max-results=500

Sitemap: http://example.blogspot.com/feeds/posts/default?orderby=UPDATED

Sitemap: http://example.blogspot.com/atom.xml?redirect=false&start-index=1&max-results=500

Sitemap: http://example.blogspot.com/atom.xml?redirect=false&start-index=501&max-results=500
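If your blog keeps growing, the Sitemap lines can be generated instead of typed by hand. The sketch below is only an illustration: the function sitemap_lines is our own, and it assumes the feed's start-index counts from 1, so consecutive pages of 500 posts begin at 1, 501, 1001 and so on without overlapping.

```python
def sitemap_lines(blog_url: str, total_posts: int, page_size: int = 500) -> list[str]:
    """Build Sitemap lines for a Blogger robots.txt.

    The first line is the default feed (the most recent posts). For blogs with
    more than 25 posts, one atom.xml line is added per page of `page_size` posts.
    """
    base = blog_url.rstrip("/")
    lines = [f"Sitemap: {base}/feeds/posts/default?orderby=UPDATED"]
    if total_posts > 25:
        # Assumption: start-index is 1-based, so pages begin at 1, 501, 1001, ...
        for start in range(1, total_posts + 1, page_size):
            lines.append(
                f"Sitemap: {base}/atom.xml?redirect=false"
                f"&start-index={start}&max-results={page_size}"
            )
    return lines


# Example: a blog with about 1000 posts gets the default line plus two pages.
for line in sitemap_lines("http://example.blogspot.com", 1000):
    print(line)
```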

How To Add Custom Robots.txt Tag In Blogger?

1.) Go To www.blogger.com

2.) Open Your Desired Blog.

3.) Go To The “Settings” Tab.

4.) Click “Search preferences”.

5.) Now Look For The “Crawlers and indexing” Heading.

6.) Find “Custom robots.txt”.

7.) Click The Blue “Edit” Link Next To It.

8.) Now Change “No” To “Yes”.

9.) Write Your Desired Robots Text For Your Blog. (Warning! Use with caution. Incorrect use of these features can result in your blog being ignored by search engines.)

10.) Click “Save changes”. Now You Are Done.

11.) If You Didn’t Get It, See The Tutorial Screenshot Below.

With the above procedure, you have successfully enabled a custom robots.txt file for your blog.
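Because of the warning in the steps above, it can help to test your robots text before saving it. The following is a minimal sketch of such a check, not a Blogger feature: the function blocked_paths and the sample post path are our own, and Python's standard urllib.robotparser only approximates how a real search engine reads the file.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(rules_text: str, must_stay_open: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the paths from `must_stay_open` that the rules would block."""
    parser = RobotFileParser()
    parser.parse(rules_text.splitlines())
    return [path for path in must_stay_open if not parser.can_fetch(agent, path)]


# A risky candidate: Disallow: / shuts crawlers out of the whole blog.
risky_rules = """\
User-agent: *
Disallow: /
"""

# Pages we never want blocked (the post path is a hypothetical example).
important = ["/", "/2013/05/sample-post.html"]

problems = blocked_paths(risky_rules, important)
if problems:
    print("Do not save these rules, they block:", problems)
else:
    print("Important pages stay crawlable.")
```

An empty result from blocked_paths means none of the listed pages are blocked by the rules you are about to paste.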

How To Check Your Robots.txt File?

You can check this file by adding /robots.txt to the end of your blog URL in the browser. Take a look at the example below for a demo. Once you visit the robots.txt URL, you will see the entire content of your custom robots.txt file. See the image below.
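The address can also be built in code. This is a small sketch using Python's standard urllib.parse; the function name robots_txt_url is our own, and it assumes plain http when no scheme is given.

```python
from urllib.parse import urljoin


def robots_txt_url(blog_url: str) -> str:
    """Return the robots.txt address for a blog, whatever page URL is given."""
    if "://" not in blog_url:
        # Assumption: default to http when the scheme is missing.
        blog_url = "http://" + blog_url
    # A leading slash makes urljoin resolve against the site root.
    return urljoin(blog_url, "/robots.txt")


print(robots_txt_url("www.yourblog.com"))
print(robots_txt_url("http://example.blogspot.com/2013/05/sample-post.html"))
```

Opening the printed address in a browser shows the file that crawlers actually receive.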

Final Words:

Here we have shared complete knowledge about the custom robots.txt file in Blogger. I tried to make this tutorial effective and simple, but if you still have any doubt or query, feel free to ask me. Think twice before editing the file, because a single mistake can drop your blog out of SE. So if you are not a pro, leave the default robots.txt as suggested by Blogger. If you like it, then share and comment here…

Dude, I want to ask how you added the post widget in a horizontal line like on your home page. How did you split them into 2 posts per line?

You Can Also Make Your Blog In This Style By Just Using Our Code From: How To Add Grid Style Blogger Posts In Blogspot?

thanks so much bro =))

Welcome Here And Thanks For Liking Our Tutorial…

Excellent Website for blogspot and webpage and website. Dear Muhammad Hassan keep writing to post. Really helpful article for blogger.

Welcome Here And Thanks For Appreciating Me And Leaving Your Views About This Blog. Be With Us To Get More…

“Hello there!

What a very helpful post you got here! I have learned something new today. Anyway, thank you very much for guiding us on how to add custom robots.txt file tag in blogger. I am actually searching for this for almost a week now and I am so happy that I have found your post. I will definitely share this article of yours to others. Thank you!”

Welcome Here And Thanks For Liking Our Article. Be With Us So You Can Get More What We Have.

Hi, thanks for the information. I am using blogger with default Robots.txt, all the links from my Blog have been ranked on Google search results. However, none is ranked on Yahoo search result. Do you know why? From the Bing Webmaster tool, it mentions that the URLs have been blocked by the Robots.txt, but I have not customized the Robots.txt, still the default Robots.txt. Could you please help me to solve this problem. Ranking in Yahoo is also important!!! Many thanks, Michael

In Bing Webmaster Tools, Do They Show Any Specific Error Explaining Why Your Robots.txt File Is Blocking URLs? If You Want To Confirm Your Robots.txt, You Can Create A New File With A Robots.txt Generator. Then It Will Be Confirmed That Your Robots.txt File Is Not The Problem And Bing Webmaster Tools Is.

Thanks Bro, It Works 100%. Now I Have 0 Errors In My Sitemap.

You Are Welcome. Stay With Us To Get More Anytime…

How To Fix Robots txt in Blogger? A better SEO is also needed to get organic traffic from Fix Robots txt in Blogger.

Welcome here and thanks for reading our article and sharing your view.

Yoast SEO is a WordPress plug-in designed to help you improve some of the most important on-page SEO factors–even if you aren’t experienced with Web development and SEO. This plug-in takes care of everything from setting up your meta titles and descriptions to creating a sitemap. Yoast even helps you tackle the more complex tasks like editing your robots.txt and .htaccess.

Some of the settings may seem a little complex if you’re new to SEO and WordPress, but Yoast created a complete tutorial to help you get everything set up. And the team at WPBeginner

Welcome here and thanks for reading our article and sharing your view. This will be very helpful to us to let us motivate to provide you more awesome and valuable content from a different mind. Thanks for reading this article.

Nice article.

I have correctly added my robots.txt.

but the warning has not gone away.

Welcome here and thanks for reading our article and sharing your view. What is the warning you are getting, and what is the problem? We will be happy to assist you via email support. Just contact us.