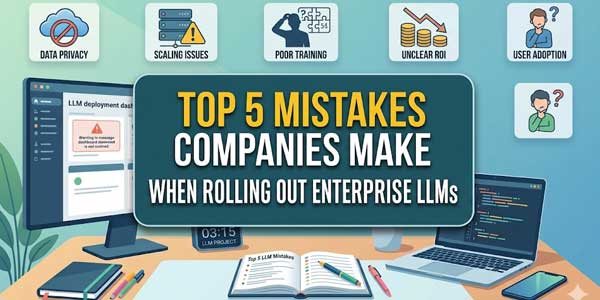

Enterprise LLM adoption is no longer in its early stages. Most companies have experimented with LLMs to some extent and are moving ahead with implementation.

However, a large share of them have failed in their implementations. The problem is not the LLM itself; it is the way it was implemented.

A handful of recurring causes sit behind most of these failures.

1.) Treating LLMs as a Plug-and-Play Solution:

This is one of the most common mistakes made when working with LLMs.

Treating an LLM as just another piece of plug-in software for an existing system is a mistake.

Simply connecting an API and building a set of input/output interfaces around it is not enough.

This is because:

- LLMs are dynamic technologies

- They do not follow predetermined rules or fixed logic

- They rely heavily on how they are applied and on the inputs they receive

Ignoring this leads to:

- Generic, non-specific output

- Highly variable output

- Unexpected results

While implementing LLMs, there are certain things that need consideration.

They include:

- Prompt structure

- Context injection

- System-level instructions

- Output constraints
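As a minimal sketch of how these four pieces can fit together, the Python below assembles a structured prompt and validates the model's reply. It makes no real API call. The `role`/`content` message layout follows a common chat-style convention and is an assumption here, as are the names `SYSTEM_INSTRUCTIONS`, `build_messages`, and `parse_constrained_output` and the example JSON shape.

```python
import json

# Hypothetical system-level instructions, including the expected output format.
SYSTEM_INSTRUCTIONS = (
    "You are a support assistant. Answer only from the provided context. "
    'Reply with JSON of the form {"answer": string, "source": string}.'
)


def build_messages(question: str, context_docs: list[str]) -> list[dict]:
    """Prompt structure: system rules first, then injected context, then the question."""
    context = "\n\n".join(f"[doc {i + 1}] {doc}" for i, doc in enumerate(context_docs))
    user_content = f"Context:\n{context}\n\nQuestion: {question}"
    return [
        {"role": "system", "content": SYSTEM_INSTRUCTIONS},
        {"role": "user", "content": user_content},
    ]


def parse_constrained_output(raw: str) -> dict:
    """Output constraint: accept only valid JSON with exactly the expected keys."""
    data = json.loads(raw)  # malformed JSON raises json.JSONDecodeError (a ValueError)
    if not isinstance(data, dict) or set(data) != {"answer", "source"}:
        raise ValueError("unexpected output shape")
    return data


messages = build_messages(
    "What is the refund window?",
    ["Refunds are accepted within 30 days."],
)
```

Rejecting replies that do not match the expected shape, rather than passing them downstream, is one way to keep output variability from reaching users.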

2.) Undefined Use Case:

Many organizations begin with a general objective.

- “Enhance efficiency through artificial intelligence.”

- “Incorporate AI in operations.”

On the surface, this might seem like an acceptable goal, but it doesn’t lead to anything real.

LLMs work best when their purpose is clearly defined.

Examples include:

- Automation of certain customer queries

- Support for internal functions through document lookup

- Creation of structured reports from provided data inputs

Without a defined use case:

- There are no metrics for success

- There are no optimization objectives

- Results appear inconsistent

This causes uncertainty.

Various teams adopt the model without consensus. There’s no standardized approach to its implementation.

As time goes by, its adoption is hindered because nobody knows precisely what the tool should be doing.

Defining the use case up front solves many issues.
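One way to make a use case concrete is to write it down together with a success metric and a target. The sketch below is illustrative only: the `UseCase` class, the `resolution_rate` metric, and the 0.70 target are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    success_metric: str
    target: float  # minimum acceptable metric value, between 0 and 1


def resolution_rate(outcomes: list[bool]) -> float:
    """Share of queries resolved without human escalation."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def meets_target(use_case: UseCase, measured: float) -> bool:
    """Compare a measured metric value against the use case's target."""
    return measured >= use_case.target


# Hypothetical use case: three of four sampled queries were resolved.
support_bot = UseCase("Automate order-status queries", "resolution_rate", 0.70)
measured = resolution_rate([True, True, False, True])
on_track = meets_target(support_bot, measured)
```

With a target written down, teams have a shared way to tell whether the tool is doing what it was adopted for.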

3.) Lack of Attention to the Quality and Context of Data:

Most of the attention goes to choosing the right model. Little or nothing is done to prepare the data.

That is where the problem starts.

Enterprise LLMs depend heavily on:

- Corporate documentation

- Knowledge bases

- Data on processes

- Historical data

If the data is bad or insufficient, then the result will be bad too.

As a result:

- Answers will be wrong

- Context will be ignored

- Details will be imprecise

This is commonly referred to as “hallucination.” In enterprise deployments, it is often linked to inadequate data.

LLMs cannot replace enterprise knowledge bases; they only act as interpreters of your data.

If there is no reliable data, they will come up with something on their own.

That is where trust breaks.

Improving data quality will often do more good than further work on the model itself.
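A basic data audit can be run before any document reaches the model. The sketch below flags three simple problems: near-empty text, exact duplicates after normalization, and missing source attribution. The document fields (`id`, `text`, `source`), the `audit_documents` name, and the 40-character threshold are assumptions for illustration; real pipelines would likely need richer checks.

```python
def audit_documents(docs: list[dict], min_chars: int = 40) -> dict[str, list[str]]:
    """Group document ids by the quality problem found."""
    issues: dict[str, list[str]] = {
        "empty_or_short": [],
        "duplicate": [],
        "missing_source": [],
    }
    seen: set[str] = set()
    for doc in docs:
        # Normalize whitespace and case so trivially different copies compare equal.
        text = " ".join(doc.get("text", "").split()).lower()
        if len(text) < min_chars:
            issues["empty_or_short"].append(doc["id"])
            continue
        if text in seen:
            issues["duplicate"].append(doc["id"])
        seen.add(text)
        if not doc.get("source"):
            issues["missing_source"].append(doc["id"])
    return issues


docs = [
    {"id": "a", "text": "Refunds are accepted within 30 days of purchase with a receipt.", "source": "policy.pdf"},
    {"id": "b", "text": "refunds are accepted within 30 days of  purchase with a receipt.", "source": "wiki"},
    {"id": "c", "text": "TBD", "source": "wiki"},
    {"id": "d", "text": "Escalate billing disputes to the finance team within two business days."},
]
report = audit_documents(docs)
```

Here `report` lists document `c` as too short, `b` as a duplicate of `a`, and `d` as missing a source.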

4.) No Rules or Guidelines:

Another major issue is uncontrolled usage.

After deployment, organizations let people use the tool without establishing any rules.

This causes many problems.

Different users:

- Create different prompts

- Expect different things from the outputs

- Interpret the results in their own way

There are no clear guidelines.

Further problems follow:

- Exposure of sensitive data

- Misuse of outputs in decision-making

- Inconsistent responses to customer requests

The absence of such a control mechanism leads to unpredictability.

Instead, clear guidelines should be put in place:

- Usage guidelines

- Approved use cases

- Review stages

- Controlled access

This does not mean restricting the adoption of AI.

It is a prerequisite for making that adoption effective.
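Guidelines such as approved use cases and controlled access can also be enforced in code before a prompt is ever sent. The sketch below is a simplified illustration: the roles, use-case names, and the email-only redaction rule are hypothetical, and a real deployment would need a broader definition of sensitive data.

```python
import re

# Hypothetical policy: which roles may use which approved use cases.
APPROVED_USE_CASES = {
    "support_agent": {"customer_reply_draft"},
    "analyst": {"report_summary", "document_lookup"},
}

# Simplified email pattern, used only to illustrate redaction.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def is_allowed(role: str, use_case: str) -> bool:
    """Controlled access: unknown roles get no use cases at all."""
    return use_case in APPROVED_USE_CASES.get(role, set())


def redact(text: str) -> str:
    """Limit data exposure by masking email addresses in the prompt."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


def prepare_request(role: str, use_case: str, prompt: str) -> str:
    """Reject unapproved usage, then return a redacted prompt."""
    if not is_allowed(role, use_case):
        raise PermissionError(f"{role!r} is not approved for {use_case!r}")
    return redact(prompt)


safe_prompt = prepare_request(
    "analyst", "report_summary", "Summarize the thread with jane.doe@example.com"
)
```

Putting the check in one place means every team goes through the same rules instead of interpreting them individually.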

5.) High Demand for Quick ROI:

Organizations often expect quick returns from LLMs.

They treat LLM adoption as a short-term initiative and expect the investment to pay off quickly.

That rarely happens.

The performance of LLMs improves through:

- Iterative processes

- Feedback

- Tuning

Early on, the organization goes through:

- Trial and error

- Identification of failure points

- Workflow adjustments

This takes time.

The initial outputs are not ideal, but they improve over time as the implementation is refined through iteration.

So when organizations expect quick ROI from LLMs:

- Patience runs out

- Investment is cut

- Projects are abandoned early
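The iteration and feedback described in this section can be tracked with very little machinery. The sketch below records helpful/unhelpful ratings per prompt version and compares them; the `FeedbackLog` class, the version labels, and the ratings are hypothetical and serve only to show the shape of such a loop.

```python
from collections import defaultdict


class FeedbackLog:
    """Collects helpful/unhelpful ratings per prompt version."""

    def __init__(self) -> None:
        self._ratings: dict[str, list[bool]] = defaultdict(list)

    def record(self, version: str, helpful: bool) -> None:
        self._ratings[version].append(helpful)

    def approval_rate(self, version: str) -> float:
        """Share of helpful ratings; 0.0 for a version with no feedback yet."""
        ratings = self._ratings.get(version, [])
        return sum(ratings) / len(ratings) if ratings else 0.0

    def best_version(self) -> str:
        """Version with the highest approval rate (requires at least one rating)."""
        return max(self._ratings, key=self.approval_rate)


log = FeedbackLog()
for helpful in (True, False, False, True):
    log.record("prompt_v1", helpful)
for helpful in (True, True, False, True):
    log.record("prompt_v2", helpful)
```

Comparing versions this way gives stakeholders evidence of gradual improvement, which can help when the pressure for quick ROI builds.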

FAQs:

Why do most enterprise LLM implementations fail?

Because the emphasis is placed on the model rather than on its application, data, and structure.

Is it necessary for organizations to create their own model?

In most scenarios, no, since the existing models suffice.

Why does data matter?

Because outputs become unreliable without good data.

Are LLMs applicable to all departments in organizations?

Yes, but only if there are clear use cases.

How much time does it take to get results?

Results take time. Early outputs are rarely ideal and improve through iteration, feedback, and tuning.

Final Thoughts:

Enterprise LLM projects do not fail because of the technology. They fail because of how they are implemented. The main problems are the lack of a framework and the demand for immediate results. When businesses focus on the right use cases, reliable data, and well-scoped applications, and work with tech recruitment agencies that understand how to source talent for AI-driven roles, LLMs start to show real results.
